Do you know why you send your marketing emails in the mornings, why your CTA buttons are styled in a certain way, or why you format your subject lines with personalization? You may have a hunch or may have read an article that made a claim about best practices for email marketing, and you decided to apply that to your own emails. But do you have the data to support that claim for your audience and marketing?

Enter A/B testing. If you have a question or hypothesis about what might perform better in your marketing campaigns, test it! And regardless of which marketing automation platform you’re using (Marketo, HubSpot, Salesforce Marketing Cloud Account Engagement (MCAE), etc.), you’ll always want to incorporate A/B testing!

What is A/B testing?

A/B testing is where marketing gets scientific. An A/B test is a randomized experiment that compares two (or more) variants and evaluates the success or performance of a single variable. To put it simply – A/B testing is how you compare two assets and figure out which one performs better. In this article, we’ll explore the A/B testing features in Marketo Engage and various things to consider when determining which approach may be best for your marketing operations team.

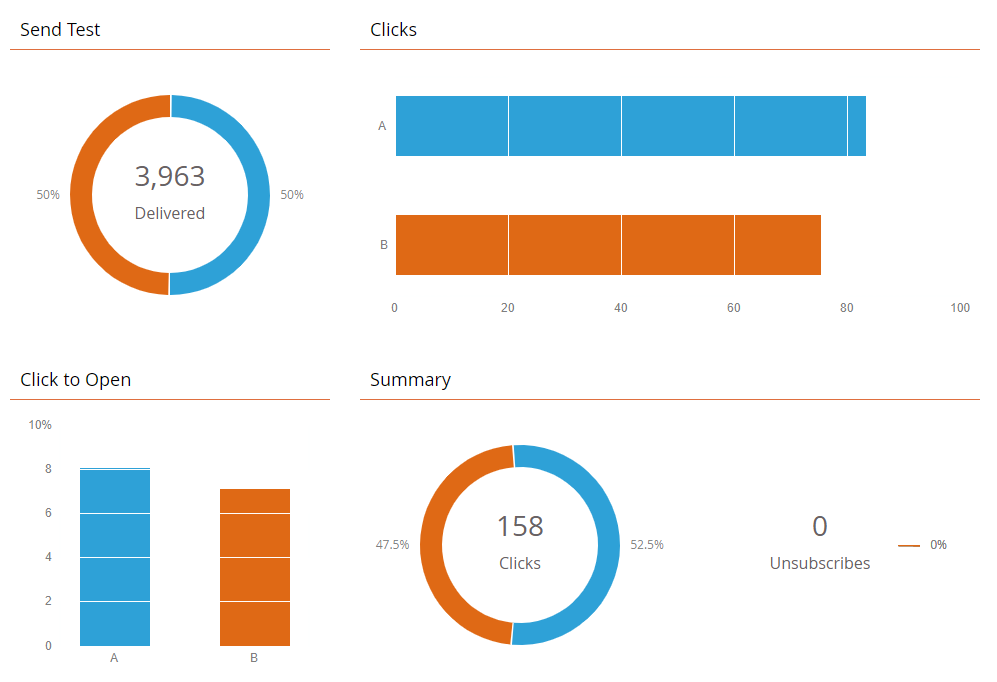

Below is a graphic of some key metrics Marketo will provide upon completion of an A/B test. Your team should be well aware of these metrics, along with many others.

In addition to clicks, opens, deliveries, and unsubscribes, an Email Performance Report will provide the following metrics: % delivered, hard bounced, soft bounced, opened, % opened, % clicked, clicked-to-open ratio, and % unsubscribed. Whether you’re a seasoned email marketing professional or new to the industry, you will need to understand these metrics to conduct and analyze your own A/B tests.
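If it helps to see how the percentage metrics relate to the raw counts, here is a minimal Python sketch. The definitions are assumptions on our part (% delivered relative to sent, % opened, % clicked, and % unsubscribed relative to delivered, clicked-to-open relative to opened), so confirm them against your own report. The `EmailCounts` class, its field names, and the example numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class EmailCounts:
    """Raw counts for one email variant (hypothetical field names)."""
    sent: int
    delivered: int
    opened: int
    clicked: int
    unsubscribed: int


def rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a percentage, or 0.0 if undefined."""
    return 100.0 * numerator / denominator if denominator else 0.0


def email_metrics(c: EmailCounts) -> dict[str, float]:
    # Assumed definitions; check them against your own reporting tool.
    return {
        "pct_delivered": rate(c.delivered, c.sent),
        "pct_opened": rate(c.opened, c.delivered),
        "pct_clicked": rate(c.clicked, c.delivered),
        "clicked_to_open": rate(c.clicked, c.opened),
        "pct_unsubscribed": rate(c.unsubscribed, c.delivered),
    }


if __name__ == "__main__":
    # Made-up counts for a single variant.
    variant_a = EmailCounts(sent=1000, delivered=980, opened=245,
                            clicked=49, unsubscribed=2)
    for name, value in email_metrics(variant_a).items():
        print(f"{name}: {value:.1f}%")
```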

On the surface, this is a very straightforward process. However, in order to get clear results from the test, your marketing operations team should follow these A/B testing best practices.

Do not test without a hypothesis.

When you’re getting an A/B test spun up, you absolutely must have a hypothesis – aka a theory or question you wish to explore. A good hypothesis will be clear, focused, and easily testable. Some examples of good hypotheses for A/B testing emails are:

- Sending our newsletter at 6 AM on Monday morning will result in a higher click-to-open ratio.

- Changing the button color to green will result in a higher click percentage.

- Including the recipient’s first name in the subject line will result in a higher open rate.

Do not test more than one variable at a time.

If you are A/B testing an email, only one element of that email should differ between the test variants. You can test anything – from subject lines, to time sent, to audiences, to button color – but you should only ever include one of those variables in a single A/B test. Otherwise, you won’t be able to tell which change was responsible for the difference in results.

Do not test with a small sample size.

This can be a little fuzzy, as what counts as a “small sample size” varies from scenario to scenario. Generally for emails, we recommend a total audience of at least 1,000 records. You can also use a sample size calculator, like this one from Optimizely, to get a more definite answer on how many records you’ll need in your sample to get results with the level of certainty you’re comfortable with. The more records you can test against, the better. If you test against a small sample, you will still get results, but they may not be statistically significant, which means you can’t draw a conclusion from them. If you’re consistently finding that your A/B tests yield results that aren’t statistically significant, consider sending your tests to larger audiences.
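To get a feel for what a sample size calculator is doing, here is a rough Python sketch using a standard normal-approximation formula for comparing two rates. This is not how Optimizely's calculator works (online tools use their own methods, so expect different numbers), and the function name, the default significance level of 0.05, and the default power of 0.80 are our own assumptions.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate records needed in EACH variant to detect the difference
    between two rates with a two-sided test (normal approximation)."""
    if not (0 < baseline_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("rates must differ to compute a sample size")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


if __name__ == "__main__":
    # Hypothetical goal: lift an open rate from 20% to 25%.
    print(sample_size_per_variant(0.20, 0.25))
```

The smaller the lift you want to detect, the more records each variant needs, which is why small audiences so often produce inconclusive tests.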

Evaluate results for statistical significance.

If your test results aren’t statistically significant, you can’t draw any meaningful conclusion from the test. A quick Google search of “A/B testing statistical significance calculator” will give you a number of helpful online tools for evaluating your A/B tests. I personally recommend Neil Patel’s statistical significance calculator, as it simplifies the results into a format that is easy to understand whether you have knowledge of statistics or not. Knowing whether your results are statistically significant, and how confident you can be that the change will keep producing consistent results, will help you make informed, data-driven decisions. Making objective decisions based on the data, as opposed to subjective ones, should always be at the forefront of your campaign planning and execution process.
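If you are curious what such a calculator does under the hood, one common approach is a two-proportion z-test. The Python sketch below is an illustration of that technique, not the method any particular online calculator uses; the function name and the example counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(successes_a: int, total_a: int,
                          successes_b: int, total_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates,
    e.g. opens out of delivered emails for variant A versus variant B."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("each variant needs at least one record")

    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_error == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference

    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Made-up results: 200 of 1,000 opened variant A, 250 of 1,000 opened B.
    z, p = two_proportion_z_test(200, 1000, 250, 1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
    print("significant at the 5% level" if p < 0.05 else "not significant")
```

A p-value below 0.05 is a common threshold for calling a result significant, but pick the threshold that matches the level of certainty your team is comfortable with.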

Plan for what to do with the results.

A/B testing will provide valuable data. Your job will be to review this data and use it to inform any changes you might want to make based on the results from your test. Depending on the nature of your test, you might adjust anything from your email design, to the voice your emails are written in, to the time of day you send emails.

Continually test.

A/B testing should be an ongoing effort. Don’t stop testing because you got a positive result on one test. Email marketing is fluid; people’s habits and motivations will shift. Continuing to test different variables, even when you think you have an answer to your hypothesis, will continue to add value to your results and either further back up your hypothesis, or introduce more questions you might want to test against. And what’s great about using an email automation platform like Marketo is that continually conducting various A/B tests can be very simple to implement!

A/B testing is a great step to take if you want to level up your email marketing strategy. If you’ve ever found yourself wondering about ways to improve your email metrics, A/B testing is the place to start collecting the data that will inform your future strategy.

How to set up A/B tests in Marketo

Now that you know the ins and outs of A/B testing best practices, you might be wondering, “How do I set up an A/B test in Marketo?” Great question. There are a few different ways you can go about this, each with its own use cases.

Set up an A/B test within an email program.

This is likely the approach you will see used the most often. It is for a one-time A/B test of an email that will be sent using an email program.

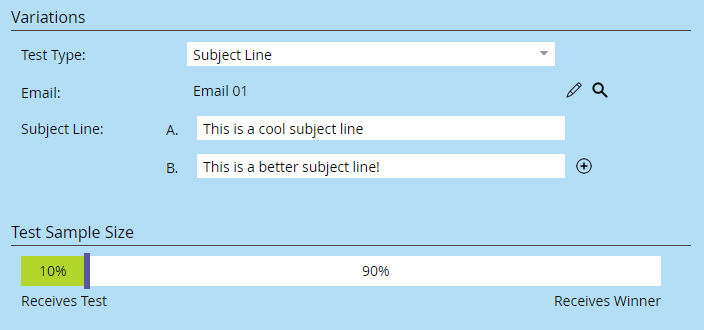

This approach allows you to A/B test subject lines, whole emails, from addresses, and date/time. You also set the sample size for the test. With the sample size set to 100%, the entire audience is split 50/50 between the variants. With a smaller sample size, only that portion of the audience receives the test, and the rest receive the winner at least 4 hours later, based on predetermined win rules. Winners with this approach can be declared manually or automatically (with the exception of date/time tests, whose winner must be declared manually).

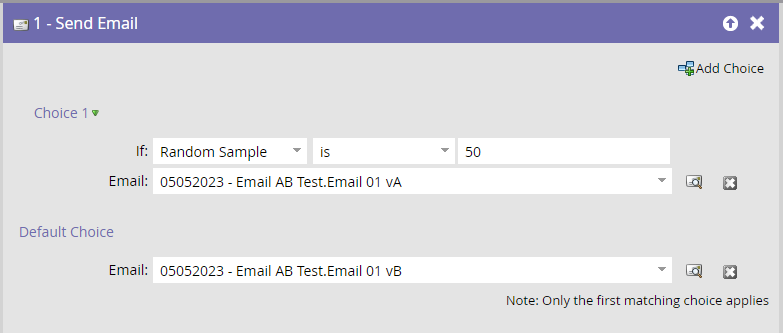

Set up an A/B test with a random sample in a smart campaign flow.

This approach is a one-time test using two separate email assets sent via a smart campaign flow step, which can come in handy for reporting purposes, as the other A/B test approaches combine the test assets into one asset in reports.

With this approach, you can test any variable since it is a whole email test. However, you cannot break up the send like you can with an A/B test from an email program – the test will send once. You will also have to review the results manually to decide which test won.

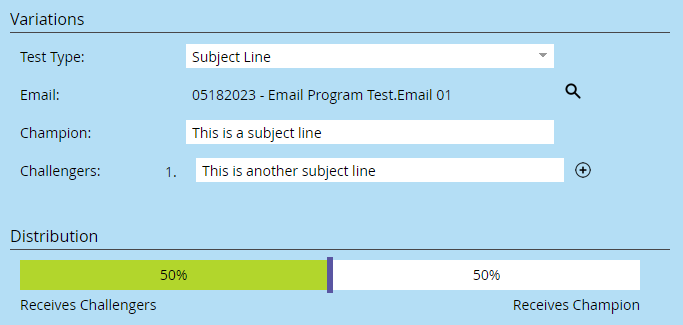
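Marketo handles the random split for you in the flow step, so you never need to write this yourself. Purely to illustrate the idea of a random 50/50 sample, here is a small Python sketch; the `split_audience` function and the record IDs are hypothetical and have nothing to do with Marketo's internals.

```python
import random


def split_audience(record_ids, seed=None):
    """Shuffle the audience and cut it in half, so each record lands in
    exactly one variant. Pass a seed to make the split repeatable."""
    shuffled = list(record_ids)
    random.Random(seed).shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


if __name__ == "__main__":
    variant_a, variant_b = split_audience(range(1, 1001), seed=7)
    print(len(variant_a), len(variant_b))
```

Because the assignment is random, differences between the two groups should come from the email itself rather than from who happened to receive it.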

Set up an A/B test with Champion/Challenger in an engagement program.

This approach is an ongoing test that can only be used in triggered campaigns and engagement programs. The test will continue to run until you manually declare a winner, at which point the winning version becomes the one that continues to be sent out.

This type of A/B test can test subject lines, from addresses, or whole emails. Since it is an ongoing test, it cannot test date/time. This method will not work if the email is sent from a batch campaign. In other words, if your engagement programs are set up so that assets are sent from a smart campaign within a nested program, instead of being added directly to the stream, Champion/Challenger will not work, because that smart campaign is considered a batch send.

Special Marketo A/B Test Considerations

For any of the above approaches, you will need to ensure that there are no duplicate records in your audience. Otherwise, a record could receive both the test send and the winning send, or both of your variant sends. If your database contains duplicates that you can’t easily remedy before an A/B test, export your audience list and dedupe it manually in Excel or Google Sheets, and then reimport it to your email program as a static list. Whatever your campaign management process looks like, you should always account for duplicates and do all you can to exclude them from your audiences.
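If you would rather script the dedupe than do it by hand in a spreadsheet, a few lines of Python will do. This is a minimal sketch that assumes duplicates are records sharing the same email address; the `Email Address` column name and the sample rows are hypothetical, so adjust them to match your own export.

```python
import csv
import io


def dedupe_by_email(rows, email_field="Email Address"):
    """Keep the first row for each email address, ignoring case and
    surrounding spaces. Rows with no email address are dropped."""
    seen = set()
    unique = []
    for row in rows:
        key = (row.get(email_field) or "").strip().lower()
        if not key or key in seen:
            continue
        seen.add(key)
        unique.append(row)
    return unique


if __name__ == "__main__":
    # Stand-in for an exported audience file (hypothetical data).
    export = io.StringIO(
        "Email Address,First Name\n"
        "pat@example.com,Pat\n"
        "PAT@example.com ,Patricia\n"
        "sam@example.com,Sam\n"
    )
    for row in dedupe_by_email(csv.DictReader(export)):
        print(row["Email Address"], row["First Name"])
```

The sketch keeps whichever duplicate appears first, so sort your export beforehand if you care which record survives.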

Another quirk to keep in mind when A/B testing in Marketo is how an A/B test can change the way assets and programs are referenced. If an email program is referenced in a smart list and is later updated to include an A/B test, the program’s name changes (for example, from “2023-05-03-Email-Program” to “2023-05-03-Email-Program.Subject Line Test”), and Marketo does not automatically update the name where it is referenced in the smart list. Similarly, when you create an A/B test on an email asset, you will not be able to reference that email asset in smart lists until the email program has been activated. Both of these quirks make it extra important to have a solid QA process before launching an email that includes an A/B test.

You’ve begun A/B testing but don’t know what to make of the results?

Check out our A/B testing worksheet for a breakdown of the key metrics, so you can see where significant differences in performance may lie. Simply enter the data from an email performance report, and the worksheet will calculate the significance level. You can use this worksheet any time you perform an A/B test, and it will help you make data-driven decisions!

As you can see, A/B testing is a simple way to uplevel your email marketing strategy, and you can begin right away. You don’t need to be a Marketo power user or an expert at email marketing to run an A/B test; you just need an idea and a plan. Happy A/B testing!